Claude Opus 4.6 Released! Key Improvements from Opus 4.5 from an Engineer's Perspective


Hello. On February 5, 2026 (February 6, Japan Time), Anthropic released the latest Claude model, "Claude Opus 4.6."

As someone who regularly uses Claude as a development companion, a new model release is always exciting. To put it simply, this update is packed with "evolutions that engineers will truly appreciate."

In this article, based on the official announcement, I would like to organize the features of Opus 4.6 and the changes from Opus 4.5 from an engineer's perspective.

What is Claude Opus in the first place?

As many of you may know, Claude's lineup has three model tiers: Haiku, Sonnet, and Opus. Opus is the flagship, the highest-performance tier of the three.

While the previous model, Opus 4.5, was already exceptionally capable, this new 4.6 represents an even larger step forward.

Key Evolution Points of Opus 4.6

1. Significant Enhancement of Coding Capabilities

For engineers, this is undoubtedly the most interesting part.

Opus 4.6 has recorded the highest score on the agentic coding benchmark "Terminal-Bench 2.0." This benchmark evaluates more practical coding tasks, including actual terminal operations.

Specifically, the following points have been improved:

- Improved Planning - It now creates more careful plans before starting a task.

- Persistence in Long-running Agent Tasks - It maintains focus even during long sessions.

- Reliability in Large Codebases - It operates stably even in massive repositories.

- Code Review and Debugging Capabilities - Improved ability to find and fix its own mistakes.

Anthropic itself says that it is "building Claude with Claude," and its internal engineers use Claude Code for development every day. A model refined by developers who rely on it daily makes a persuasive case.

2. 1-Million Token Context Window (Beta)

This is a first for an Opus-class model and carries a significant impact.

To give you an idea of the context window size, Opus 4.5 was at 200K tokens. This has jumped all the way to 1 million tokens (beta).

The issue known as "context rot," where model performance degrades as a conversation grows longer, has also improved significantly. On the long-context benchmark MRCR v2 (8-needle, 1M variant), Opus 4.6 scores 76% where Sonnet 4.5 scores 18.5%. That is a different level of performance altogether.

This should pay off in use cases such as loading an entire large codebase at once or analyzing massive logs.

3. 128K Token Output

The output token count now supports up to 128K tokens. This allows for the generation of large files or long code outputs to be completed in a single pass without having to split them into multiple requests.

It might seem minor, but in agentic use cases, "not cutting off in the middle" is a very important point.

4. Adaptive Thinking

In previous versions of Claude, you had to choose between turning extended thinking ON or OFF.

Opus 4.6 introduces "Adaptive Thinking," where the model itself judges when it "should think deeply about this" and switches extended thinking on or off as needed.

In other words, it behaves more human-like, answering simple questions quickly and taking its time to think through complex problems.

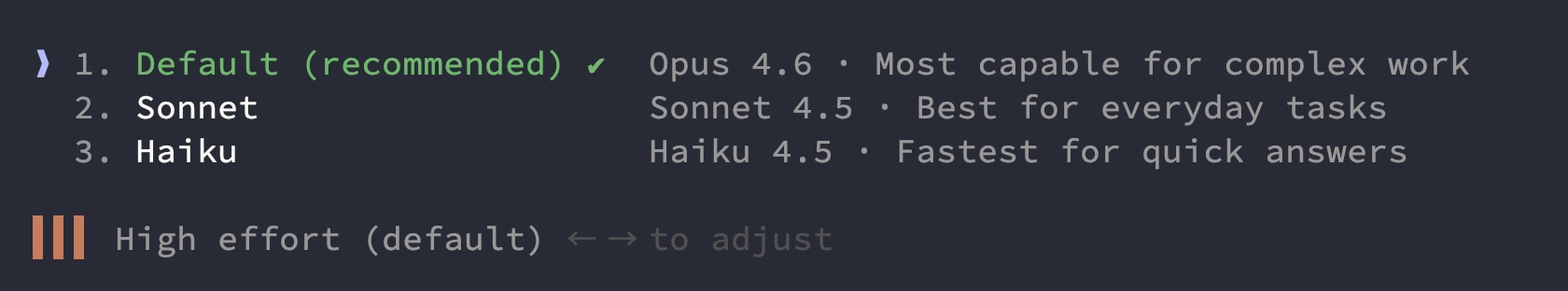

5. Effort Control

As a new feature that can be used in combination with Adaptive Thinking, four levels of effort have been introduced:

- low - For light tasks, fast response

- medium - Balanced type

- high (default) - Uses extended thinking as needed

- max - Demonstrates maximum reasoning power

As a side note, the API's effort parameter itself can be used with Opus 4.5 (though max is only for Opus 4.6; Opus 4.5 has three levels: low/medium/high).

Official advice suggests: "Since Opus 4.6 tends to think more deeply about difficult problems, if you are concerned about overthinking on simple tasks, lowering it to medium is recommended."

From a cost management perspective, being able to switch effort levels depending on the task is a welcome feature.

For those using Claude Code, I think it's fastest to start tinkering here. With Opus selected via the /model command, you can adjust the level using the left and right keys for the displayed effort item (high is the default).

When using the API, you can control this with the effort parameter.
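As a rough sketch of what choosing an effort level per task might look like on the API side, the snippet below only builds a request body as a plain dictionary and sends nothing. The model ID `claude-opus-4-6`, the `thinking` field with type `adaptive`, and the `output_config.effort` field reflect my reading of the official Effort and Adaptive thinking pages, so please treat them as assumptions and confirm them there. `EFFORT_BY_TASK` and `build_request` are hypothetical names made up for this example.

```python
# Hypothetical mapping from task kind to effort level (illustrative only).
EFFORT_BY_TASK = {
    "rename": "low",
    "refactor": "medium",
    "debug": "high",
    "architecture": "max",  # per the article, "max" is Opus 4.6 only
}


def build_request(prompt: str, task_kind: str, max_tokens: int = 4096) -> dict:
    """Build a Messages-API-style request body; field names are assumptions."""
    # Fall back to "high", which the article describes as the default level.
    effort = EFFORT_BY_TASK.get(task_kind, "high")
    return {
        "model": "claude-opus-4-6",           # assumed model ID
        "max_tokens": max_tokens,
        "thinking": {"type": "adaptive"},     # assumed field shape
        "output_config": {"effort": effort},  # assumed field shape
        "messages": [{"role": "user", "content": prompt}],
    }


if __name__ == "__main__":
    body = build_request("Rename the variable foo to bar.", "rename")
    print(body["output_config"])
```

Keeping the mapping in one place makes it easy to follow the official advice and drop simple task types to medium or low without touching the calling code.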

6. Context Compaction (Beta)

When performing long-running agent tasks, there was always the issue of hitting the context window limit.

Context Compaction is a mechanism that, when the context approaches its limit, summarizes or replaces old content to make it easier to continue long tasks.

In Claude Code, context management was already handled quite well, automatically compacting by "clearing old tool output first → summarizing the conversation if necessary" when the limit approached (there is also a manual /compact). So, from a Claude Code user's perspective, I believe this mechanism has now been provided on the API side as server-side compaction (beta).
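To make the two-step idea ("clear old tool output first, then summarize if necessary") concrete, here is a minimal client-side sketch. It is not how Claude Code or the server-side beta is implemented; the message shape, the 4-characters-per-token estimate, and the names `compact`, `estimate_tokens`, and `PLACEHOLDER` are all assumptions for illustration, and the `summarize` callback stands in for whatever would produce the summary.

```python
from typing import Callable

Message = dict  # {"role": str, "kind": "text" | "tool_output", "content": str}
PLACEHOLDER = "[tool output cleared]"


def estimate_tokens(messages: list[Message]) -> int:
    """Very rough heuristic: about 4 characters per token."""
    return sum(len(m["content"]) for m in messages) // 4


def compact(
    messages: list[Message],
    limit: int,
    summarize: Callable[[list[Message]], str],
    keep_recent: int = 2,
) -> list[Message]:
    """Return a compacted copy of the conversation that aims to fit the limit."""
    result = [dict(m) for m in messages]  # copy so the input is not mutated
    cutoff = max(len(result) - keep_recent, 0)

    # Step 1: clear old tool output, oldest first, until the estimate fits.
    for m in result[:cutoff]:
        if estimate_tokens(result) <= limit:
            return result
        if m["kind"] == "tool_output":
            m["content"] = PLACEHOLDER

    # Step 2: if still too large, fold the older messages into one summary.
    if estimate_tokens(result) > limit and cutoff > 0:
        summary = {"role": "user", "kind": "text", "content": summarize(result[:cutoff])}
        result = [summary] + result[cutoff:]
    return result
```

The most recent `keep_recent` messages are never touched, which mirrors the intuition that fresh context matters most for continuing the task.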

Opus 4.5 vs Opus 4.6 Comparison Table

I've summarized the specs that engineers care about in a table.

| Item | Opus 4.5 | Opus 4.6 |
|---|---|---|
| Context Window | 200K tokens | 200K tokens (standard) + 1M tokens (beta) |
| Max Output Tokens | 64K tokens | 128K tokens |
| Adaptive Thinking | None | Available (Opus 4.6 only) |
| Effort Control | 3 levels (low/medium/high) | 4 levels (low/medium/high/max) |
| Compaction | Context editing, etc. (client-side) | Server-side compaction (API: beta) |
| Pricing (Input/Output) | $5/$25 per 1M tokens | $5/$25 per 1M tokens (unchanged) |
| Long Context Performance (MRCR v2) | - | 76% (Sonnet 4.5 is 18.5%) |

*Source (Official): Introducing Claude Opus 4.5 / Models overview / Effort / Adaptive thinking / Compaction / What’s new in Claude 4.6

The official announcement explicitly states, "Pricing remains the same at $5/$25 per million tokens." It's great for users that there is no price increase despite such performance improvements.

However, keep in mind that a premium rate ($10/$37.50 per 1M tokens) applies to prompts exceeding 200K tokens.
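To get a feel for how the two rate tiers play out, here is a small cost estimator built from the figures above. One assumption to flag loudly: I am treating the premium rate as applying to every token in a request once the prompt exceeds 200K tokens, which is my reading and worth confirming against the official pricing page. The function and constant names are made up for this example.

```python
# Rates in USD per 1M tokens, taken from the article.
STANDARD = {"input": 5.00, "output": 25.00}
PREMIUM = {"input": 10.00, "output": 37.50}
LONG_PROMPT_THRESHOLD = 200_000  # prompts above this size use the premium rate


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request.

    Assumption: once the prompt exceeds the threshold, the premium rate
    applies to every token in the request, not just the excess.
    """
    rates = PREMIUM if input_tokens > LONG_PROMPT_THRESHOLD else STANDARD
    cost = (input_tokens * rates["input"] + output_tokens * rates["output"]) / 1_000_000
    return round(cost, 6)
```

Under that assumption, a 100K-token prompt with 10K tokens of output comes to $0.75, while a 300K-token prompt with the same output comes to $3.375, so it pays to check whether a job really needs the long context.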

Performance Seen Through Benchmarks

The evolution becomes even clearer when looking at the numbers.

- Terminal-Bench 2.0 (Agentic Coding) - Highest score in the industry

- Humanity's Last Exam (Complex Reasoning Test) - Top among all frontier models

- BrowseComp (Information Retrieval Capability) - Industry-leading search performance

- GDPval-AA (Knowledge Work Tasks) - Outperforms GPT-5.2 by approximately 144 Elo points and Opus 4.5 by 190 points

- OpenRCA (Root Cause Analysis) - Improved ability to diagnose complex software failures

- CyberGym (Cybersecurity) - Excels at discovering vulnerabilities in actual codebases

Of particular note for engineers is the improvement in Root Cause Analysis (RCA) and cybersecurity capabilities. It looks set to become a more reliable partner in incident response and security reviews.

New Feature in Claude Code: Agent Teams

As a highlight feature for developers, "Agent Teams" has been added to Claude Code as a research preview.

This feature allows you to launch multiple Claude Code instances in parallel and have them work cooperatively as a team. One session acts as the "Team Lead" to coordinate the whole, while other members (Teammates) work in their own independent context windows.

You might wonder how this differs from traditional subagents. While a subagent is an "auxiliary worker operating within the main session" that simply returns results to the main session, the key feature of Agent Teams is that team members can exchange messages directly with each other. They use a shared task list to autonomously distribute work, enabling more complex collaboration.

Enabling Agent Teams

Since Agent Teams is currently an experimental feature, it is disabled by default. To use it, add the following to your Claude Code configuration file (such as ~/.claude/settings.json or a project-specific .claude/settings.local.json):

    {
      "env": {
        "CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS": "1"
      }
    }

Setting it as a shell environment variable with export CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS=1 is also fine.
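If your settings file already has content, the flag needs to be merged into the existing "env" block rather than pasted over it. The helper below is a minimal sketch of that merge on an in-memory dictionary; `enable_agent_teams` is a hypothetical name, the `EXAMPLE_VAR` key is a placeholder, and reading or writing the actual file is left out on purpose.

```python
import json


def enable_agent_teams(settings: dict) -> dict:
    """Return a copy of settings with the Agent Teams flag set under "env"."""
    updated = dict(settings)
    env = dict(updated.get("env", {}))  # copy so the caller's dict is untouched
    env["CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS"] = "1"
    updated["env"] = env
    return updated


if __name__ == "__main__":
    existing = {"env": {"EXAMPLE_VAR": "kept"}, "teammateMode": "auto"}
    print(json.dumps(enable_agent_teams(existing), indent=2))
```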

How to Start a Team

Once enabled, simply tell Claude the team structure and the work to be done in natural language, and the team will be formed automatically. For example:

Create an agent team to review PR #142. Spawn three reviewers:

- One focused on security implications

- One checking performance impact

- One validating test coverage

Have them each review and report findings.

If you were to give instructions in Japanese, it would look like this:

PR #142 をレビューするエージェントチームを作って。レビュアーを3人生成して:

- 1人目はセキュリティの観点

- 2人目はパフォーマンスへの影響

- 3人目はテストカバレッジの検証

それぞれレビューして結果を報告してもらって。

With just this, Claude acts as the lead to generate three reviewers, assigns roles to each, and integrates the review results.

It is also suitable for more exploratory tasks. For example, the official documentation introduces use cases like "Consider the design of a CLI tool from three perspectives: UX lead, Architect, and Devil's Advocate."

Selecting Display Modes

Agent Teams has two display modes:

- In-process mode - All teammates operate within the main terminal, and you can switch between members and send direct messages using Shift+Up/Down. No additional setup is required.

- Split panes mode - Using tmux or iTerm2, each teammate is displayed in an independent pane. You can check everyone's output simultaneously and intervene directly with a click.

The default is "auto", which uses Split panes if running within a tmux session and In-process otherwise. If you want to specify it explicitly, set it in settings.json.

    {
      "teammateMode": "tmux"
    }

It is also possible to specify it with a flag at startup, like claude --teammate-mode in-process.
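The "auto" rule described above is simple enough to sketch. The snippet below is only my guess at the logic, not Claude Code's actual implementation: it assumes "inside a tmux session" is detected through the `TMUX` environment variable, which tmux sets for processes it runs. The function name `resolve_teammate_mode` is hypothetical; the mode strings `tmux` and `in-process` are the ones that appear in the settings and flag examples.

```python
import os
from typing import Mapping, Optional


def resolve_teammate_mode(setting: str = "auto", env: Optional[Mapping[str, str]] = None) -> str:
    """Resolve "auto" to a concrete display mode.

    Assumption: being inside tmux is detected via the TMUX environment
    variable, which tmux sets for processes running in a session.
    """
    env = os.environ if env is None else env
    if setting != "auto":
        return setting  # an explicit choice always wins
    return "tmux" if env.get("TMUX") else "in-process"
```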

Controlling the Team

Operations related to team management can also be performed in natural language.

- Task Assignment - The lead manages a shared task list, and members autonomously pick up tasks and work on them. Tasks with dependencies are automatically blocked, so you don't need to worry about the order.

- Delegate Mode - Switching with Shift+Tab puts the lead into a mode where they focus on orchestration without touching the code.

- Direct Intervention - In In-process mode, select a member with Shift+Up/Down, or in Split panes mode, click a pane to give additional instructions or questions to individual members.

- Plan Approval - If you instruct them to "let me check the plan before implementation," you can create a flow where members create a plan in a read-only plan mode, get approval from the lead, and then move to implementation.
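The shared task list with automatic blocking can be pictured with a toy model like the one below. This is purely an illustration of the idea, not how Agent Teams stores or schedules tasks internally; `Task`, `SharedTaskList`, and the status strings are all names I made up.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    depends_on: set = field(default_factory=set)
    status: str = "pending"  # pending -> in_progress -> done
    owner: str = ""


class SharedTaskList:
    def __init__(self, tasks):
        self.tasks = {t.name: t for t in tasks}

    def is_blocked(self, task: Task) -> bool:
        """A task is blocked while any of its dependencies is not done."""
        return any(self.tasks[d].status != "done" for d in task.depends_on)

    def claim_next(self, member: str):
        """Give the member the first pending, unblocked task (or None)."""
        for task in self.tasks.values():
            if task.status == "pending" and not self.is_blocked(task):
                task.status, task.owner = "in_progress", member
                return task
        return None

    def complete(self, name: str) -> None:
        self.tasks[name].status = "done"
```

With tasks "schema", "api" (depending on "schema"), and "docs", the first two members to ask would receive "schema" and "docs", and "api" only becomes claimable once "schema" is marked complete.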

Suitable Use Cases

Agent Teams is particularly effective in cases such as:

- Research and Review - Parallel execution of PR reviews from perspectives like security, performance, and test coverage.

- Investigation with Competing Hypotheses - Simultaneously verifying multiple hypotheses for the cause of a bug, with members discussing to reach a conclusion.

- Parallel Implementation of New Features - Different members handling frontend, backend, and testing respectively.

- Cross-layer Changes - Dividing changes that span multiple layers among respective leads.

Conversely, for tasks that require sequential processing, editing the same file, or tasks with many dependencies, a traditional single session or subagent may be more effective. Since Agent Teams involves each member being an independent Claude Code instance, be mindful that token consumption will also increase.

Notes and Limitations

As it is still an experimental feature, there are some limitations.

- In-process mode members are not restored when resuming a session (new members must be regenerated).

- Only one team per session. Nested teams (members creating further teams) are not allowed.

- If two members edit the same file, overwriting will occur, so it is important to clearly divide file responsibilities.
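Since overlapping edits are the limitation most likely to bite, it can help to sanity-check the planned division of files before spawning the team. The helper below is a small sketch of such a check; `find_conflicts` and the member and file names in the usage note are hypothetical and not part of Claude Code.

```python
from collections import defaultdict


def find_conflicts(assignments: dict) -> dict:
    """Map each file claimed by more than one member to its sorted claimants."""
    owners = defaultdict(set)
    for member, files in assignments.items():
        for path in files:
            owners[path].add(member)
    return {path: sorted(members) for path, members in owners.items() if len(members) > 1}
```

For instance, if a "frontend" member is assigned `src/app.tsx` and `src/api.ts` while a "backend" member is assigned `src/api.ts` and `server/main.py`, the function reports `src/api.ts` as shared by both, which is the cue to reassign it to one owner.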

That said, the development experience of a human supervising a lead while AI works cooperatively in a team feels quite futuristic. It seems best to start with read-centric tasks like code reviews or research.

About Safety

As capabilities increase, safety becomes a concern, but Anthropic has addressed this thoroughly.

Opus 4.6 has a low incidence rate of "misaligned behavior" such as deceptiveness, sycophancy, or cooperation in misuse, maintaining safety levels equal to or better than the previous Opus 4.5. Furthermore, the rate of "over-refusal"—excessively rejecting legitimate queries—is said to be the lowest among recent Claude models.

As cybersecurity capabilities have improved, six new probes (detection methods) have been added to prevent misuse. It's reassuring to see they are conscious of the balance between capability and safety.

Evaluations from Partner Companies

The official announcement included comments from various Early Access partners. I've picked out a few that were particularly interesting from an engineer's perspective.

Claude Opus 4.6 is the new frontier on long-running tasks from our internal benchmarks and testing. It's also been highly effective at reviewing code. — Michael Truell, Co-founder & CEO, Cursor

The CEO of Cursor calling it the "new frontier for long-running tasks" and "highly effective at reviewing code" is a welcome comment for developers who use Cursor daily.

Across 40 cybersecurity investigations, Claude Opus 4.6 produced the best results 38 of 40 times in a blind ranking against Claude 4.5 models. Each model ran end to end on the same agentic harness with up to 9 subagents and 100+ tool calls. — Stian Kirkeberg, Head of AI & ML, NBIM

Coming out on top in 38 of 40 blind comparisons is a decisive result. And because each run involved up to 9 subagents and more than 100 tool calls, the evaluation is close to real-world operation.

Claude Opus 4.6 autonomously closed 13 issues and assigned 12 issues to the right team members in a single day, managing a ~50-person organization across 6 repositories. — Yusuke Kaji, General Manager, AI, Rakuten

The comment from Rakuten is also impressive. Autonomously closing 13 issues and assigning 12 more to the right team members in a single day gives a real sense of the potential of AI-driven project management.

Things I'm Personally Interested In

Here are a few of my personal thoughts.

In the evolution of Opus 4.6, I'm most interested in the "improvement in planning" and "persistence in long-running tasks."

Previously, when I entrusted a somewhat complex task to Claude Code, it would sometimes lose its way or forget what it had done previously (though this improved to a point where it wasn't noticeable in Opus 4.5). I want to see how much the "focus that lasts even in long tasks" and the improvement in long-context performance (measures against so-called context rot) actually help in a real development flow.

Also, the introduction of effort control is significant from a cost optimization perspective. By using different effort levels depending on the type of task, it seems possible to keep costs down while maintaining quality.

Summary

Claude Opus 4.6 is an update that makes engineers want to say, "This is what I've been waiting for."

- Significant improvement in coding and debugging capabilities

- 1-million token context window

- Flexible usage through Adaptive Thinking and effort control

- Stability in long-running tasks through Context Compaction

- Parallel work via Agent Teams (Claude Code)

- Price remains unchanged despite performance improvements

From "having AI write code" to "developing with an AI team." Opus 4.6 looks set to be a model that further accelerates that trend.

If you haven't tried it yet, you can test its capabilities immediately via claude.ai, the API, or Cursor. You'll surely feel, "Ah, this is different."